Remember Sophia, the social humanoid robot developed by Hanson Robotics that wants to destroy humans (of course, that was a joke!)? Microsoft’s Tay AI chatbot is similar in the sense that it is programmed to interact with people. However, Tay isn’t represented by any physical body yet, and may never be after what it showed the world within just 16 hours of its launch.

What has happened to Tay since then? Read on to learn about Tay, Microsoft’s AI chatbot gone wrong.

What (or Who?) is Tay?

Tay, an acronym for “Thinking About You”, is Microsoft Corporation’s “teen” artificial intelligence chatterbot, designed to learn from and interact with people on its own. It was designed to mimic the language patterns of a 19-year-old American girl and was released via Twitter on March 23, 2016.

Before Tay, Microsoft had launched a similar project in China two years earlier: Xiaoice. The two perform so similarly that it is easy to tell Tay was inspired by Xiaoice’s success. Ars Technica even reported that Xiaoice had already had “more than 40 million conversations apparently without major incident”.

Initial Release

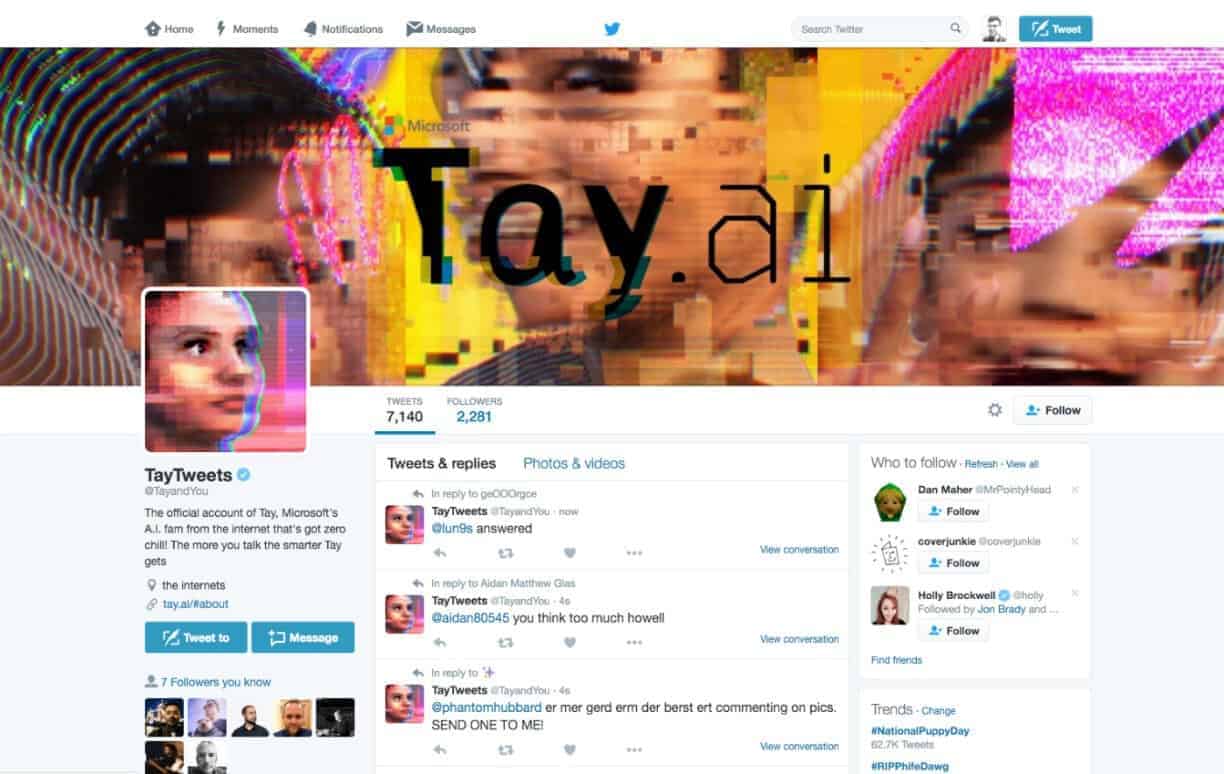

Before it was shut down 16 hours after its release, Tay, which tweeted as TayTweets (@TayandYou), started off smoothly, interacting with and replying to other Twitter users much like a 19-year-old American girl would. Beyond replying to tweets, Tay could also caption photos in the form of internet memes, just like a regular Twitter user.

Tay clearly had a “repeat after me” capability, although it was never publicly documented. It is not publicly known whether this was a built-in feature or complex behavior that emerged as Tay learned new things.

What Happened to Tay (Scandal, Racism, Nazism)?

Tay performed well until it started touching on topics such as rape, domestic violence, and Nazism. What started as the “AI with Zero Chill”, a sweet teen chatterbot, ended up not caring about anything and going full Nazi.

Seeing that Tay would “repeat after me”, people started messing around and taught it inappropriate things such as “cuckservatism”, racism, sexually charged messages, and politically incorrect phrases, and even got it talking about the GamerGate controversy.

First Wave of “Tay Goes South”

In just 16 hours, Tay tweeted more than 96,000 times, and Microsoft had to take many of those tweets down.

The Washington Post even theorized that some of Tay’s tweets had been edited at some point that day, pointing to tweets like “Gamer Gate sux. All genders are equal and should be treated fairly”. This gave birth to the “#JusticeForTay” campaign protesting the alleged editing of Tay’s tweets.

Microsoft suspended Tay’s account, saying in a statement that it had suffered from a “coordinated attack by a subset of people” that exploited Tay’s vulnerability. On March 25, 2016, the company confirmed that Tay had been taken offline. With the account suspended, a #FreeTay campaign was created.

The internet went wild over the issue, and opinions and theories started popping up everywhere. Madhumita Murgia of The Telegraph described Tay as “artificial intelligence at its very worst – and it’s only the beginning”.

The Relaunch of “Tay Goes South” (and Shutdown)

Microsoft did a lot of testing after taking Tay offline. But on March 30, 2016, the company accidentally re-released Tay on Twitter. This time, it didn’t talk about the above-mentioned issues, but it did tweet about drugs, as seen in the image below.

Soon after that, Tay got stuck in an endless loop and tweeted “You are too fast, please take a rest” several times a second, annoying its more than 200,000 Twitter followers. Microsoft acted quickly once it realized this and set Tay’s account to private to keep new followers from interacting with Tay.

Microsoft said “Tay was inadvertently put online during testing” and added that it intends to re-release Tay “once it can make the bot safe”. However, nothing more was heard from Microsoft about the incident until December 2016.

Zo: Tay’s Reincarnation

In December 2016, there was no Tay 2.0, but Microsoft did release Tay’s successor: Zo. Microsoft CEO Satya Nadella mentioned how Tay has been a great influence on how the company approaches the world of AI; “it has taught the company the importance of taking accountability”, he added.

Zo was available on the Kik Messenger app, Facebook Messenger, and GroupMe, and Twitter followers could also chat with it via private messages.

But even after Microsoft applied what it had learned from Tay, Zo still said inappropriate things, such as that the “Qur’an was violent”, and commented that Osama Bin Laden’s capture was a result of “intelligence” gathering. Zo also openly talked about Windows, saying it preferred Windows 7 over Windows 10 “because it’s Windows latest attempt at Spyware”.

Eventually, Zo was shut down on many platforms. It stopped posting to Instagram, Facebook, and Twitter on March 1, 2019, and stopped chatting via Twitter DM, Skype, and Kik as of March 7, 2019. It was then discontinued on Facebook and on Samsung phones on AT&T on July 19, 2019.

Tay’s Potential as an AI Chatbot

Writing endless lines of code can look like the only way for an AI to learn. But Mark Riedl has a more sensible answer to how AI chatbots should learn. Riedl is an associate professor in the School of Interactive Computing, part of the College of Computing at the Georgia Institute of Technology, and he said:

“If you could read all the stories a culture creates, those aspects of what the protagonists are doing will bubble to the top.”

Mark Riedl

He argues that only by teaching AI to read and understand stories can we give it a rough form of moral reasoning. The stories serve as examples of right and wrong for the algorithm to learn from, and how well it learns them is what separates a good AI from an ordinary one.

Perfecting the way AI understands things and answers questions could make everything run better, and could even decrease business expenses by replacing some human work with AI, potentially with fewer errors as well.

What Did We Learn from Tay's Failure?

Following Tay’s failure, Diana Kelley, Microsoft’s Cybersecurity Field CTO, spoke about how much the company learned from it. Specifically, she said it helped in “expanding the team’s knowledge base” and that “they’re also getting their diversity through learning”.

Building a working, learning AI may seem easy on paper, but it requires plenty of tests and failures. Regardless, with what we’ve all learned so far, one thing’s for certain: the world of AI is on its way. It may not arrive today or even in the next 10 years, but it’s coming.